Changing your larger-than-average MySQL table

We're still working on our redesign and are close to release. Today we started tackling an issue where the performance of the error page was too slow for us. In the end, we needed to change the structure of the error occurrences table, which is a lot harder than it seems.

This post was first published on the Flare blog.

We're still working on our redesign and are close to release. Today we started tackling an issue where the performance of the error page was too slow for us. In the end, we needed to change the structure of the error occurrences table, which is a lot harder than it seems.

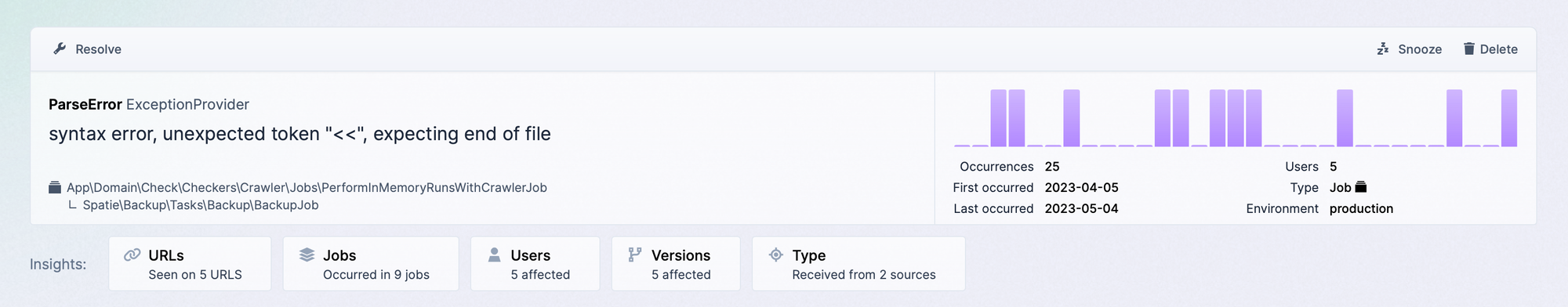

In the redesign of Flare, we've added a new feature called insights which gives you a quick overview of some statistics like which endpoints triggered the error, which users, which application versions, and so on.

The counts for these insights are always live calculated, which means queries like this:

SELECT

count(DISTINCT entry_point) AS aggregate

FROM

`error_occurrences`

WHERE

`error_occurrences`.`error_id` = ?

AND `error_occurrences`.`error_id` IS NOT NULL

AND `entry_point_type` = 'web';

Each error occurrence has an entry_point_type (web, queue, command) and an entry_point (a URL, job class, or command). These queries were quite performant on our seeded data, but in production, we have an error that has accumulated 66k occurrences. That's the point where things start to become slow.

Luckily, there's an easy solution to this, indexes! So we've added an index to entry_point_type and saw a performance boost. Later on, we tried adding an index to entry_point, but this exception popped up:

BLOB/TEXT column 'entry_point' used in key specification without a key length

Wait! Are we using a text type column for entry_point? That's a bit crazy. These columns are stored differently than varchars in MySQL, which makes them a lot slower + indexing them has some limitations. Let's fix this using a varchar column, but how many characters should it be?

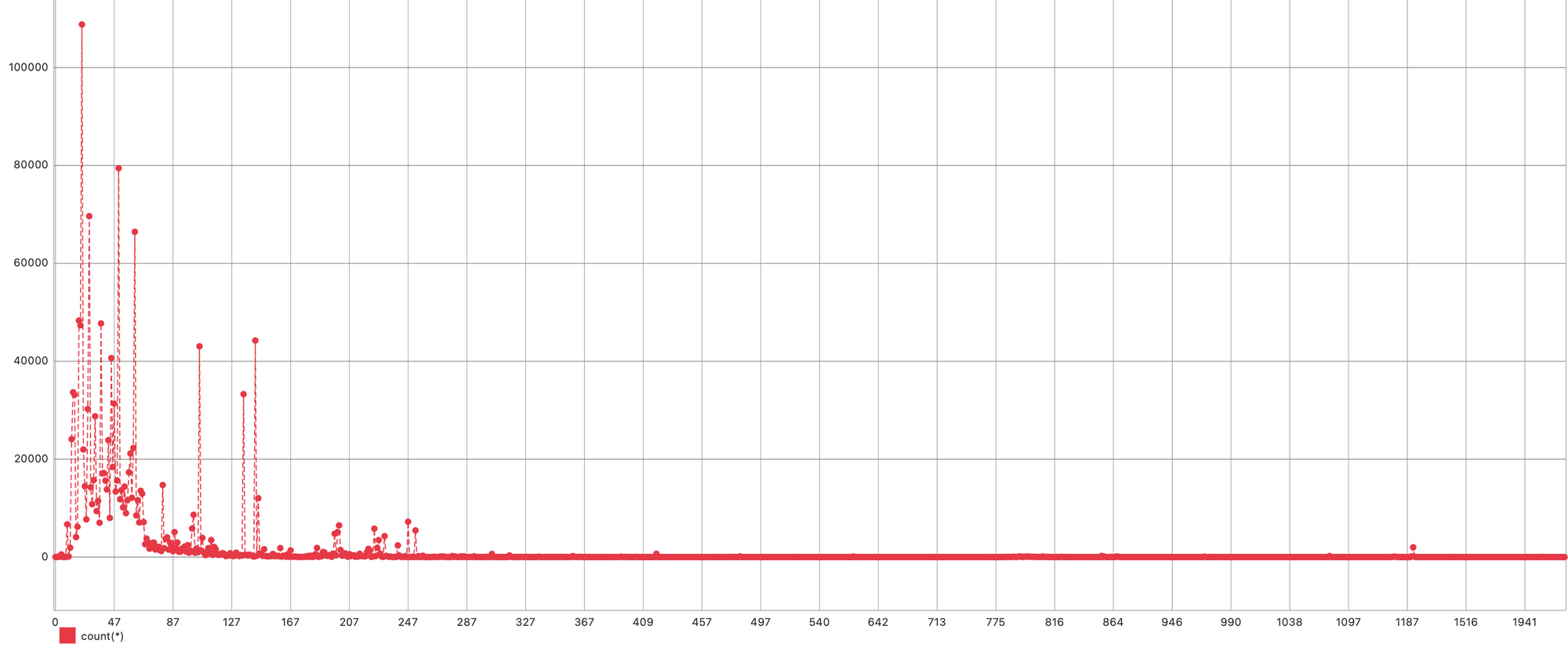

The first thing we checked was how many characters are required on average. A quick query that counted the number of occurrences for each entry_point length gave us the following:

We're pretty safe with 255 characters because almost all entry points for occurrences are around that length. Because a URL is somewhat limited to 2048 characters and varchars smartly assign space (it only takes the space required for a value), we took 2048 characters for this column.

Now changing our column can be done as such:

ALTER TABLE `flare`.`error_occurrences`

CHANGE `entry_point` `entry_point` varchar(2048) CHARACTER SET utf8mb4 COLLATE utf8mb4_unicode_ci NULL;

The thing is, this is a large table at the moment. There are 10531985 it.. 10532202 ite.. 10532278 items. You get the point. A lot of items, and the table is constantly growing.

By default, MySQL will make a copy of this table, apply the changes to that table, remove the old table, and use the newly created one instead. This process is extremely slow, and it blocks a lot of statements, completely shutting down Flare. We should avoid this at all costs.

The trick with the extra column

There are a few solutions to this problem. In our case, we did the following: we first created a new column called entry_point_2 with the correct type:

ALTER TABLE `flare`.`error_occurrences`

ADD COLUMN `entry_point_2` varchar(2048) NULL, ALGORITHM=INSTANT;

The MySQL instant algorithm will instantly add the column (it still takes some time, but no locks are required). The problem? When we ran this query, MySQL started copying our table, which was the thing we were trying to avoid.

After some research work in the MySQL documentation, we discovered that these operations will still use the copy algorithm if a fulltext index exists on the table, even when that index isn't used in the operation.

So the easy fix was to drop the fulltext index temporarily:

ALTER TABLE `flare`.`error_occurrences`

DROP INDEX `error_message_fulltext`;

Great, we can now add an extra column without too much hassle.

Moving data

Next, we move the data from entry_point to entry_point_2. This operation is going to take some time. The cool thing is we can already ensure that newly created occurrences immediately fill entry_point_2.

Doing such a thing would require some changes to the code, then deploying that code, later changing the code, deploying that code again ... too much complexity if you ask me.

MySQL has a feature called triggers, which are small hooks running before or after inserting, updating, or deleting a row. Since we only add error occurrences, an insert trigger was enough to make sure each new occurrence has its entry_point_2 set:

CREATE TRIGGER `flare`.`error_occurrences`

BEFORE INSERT ON `error_occurrences`

FOR EACH ROW

BEGIN

SET NEW.`entry_point_2` = NEW.`entry_point`;

END

Now we can update all the other entries within the table which are missing a value for entry_point_2:

UPDATE

error_occurrences

SET

entry_point_2 = entry_point

WHERE

entry_point IS NOT NULL

AND entry_point_2 IS NULL

There are probably more performant ways to this (it took a whopping 20 minutes to execute this query), but it doesn't add locks to our table and is easy to write.

Setting everything right

So we're almost there. We've got identical columns, entry_point of type text and entry_point_2 of type varchar(2048). We're going to rename these columns so that:

entry_point->old_entry_pointentry_point_2->entry_point

We can do this instantly like this:

ALTER TABLE `flare`.`error_occurrences`

RENAME COLUMN entry_point TO old_entry_point,

RENAME COLUMN entry_point_2 TO entry_point;

We remove the trigger we've created:

DROP TRIGGER `flare`.`error_occurrences`;

And in the end, altogether remove the old_entry_point column:

ALTER TABLE `flare`.`error_occurrences`

DROP COLUMN `old_entry_point`, ALGORITHM=INSTANT;

Now we can create an index on the newly created entry_point column:

ALTER TABLE `flare`.`error_occurrences`

ADD INDEX `error_occurrences_entry_point_index` (`entry_point`(255)) USING BTREE;

And, of course, we also need to create the fulltext index we removed earlier. The thing is, we can only add such an index when nothing is written to the table. To fix this, we temporarily paused Laravel Horizon:

php artisan horizon:pause

Our queues are now paused so that nothing gets written to this table. We don't lose anything thanks to Horizon because the jobs we've added still exist, and they can be executed as soon as we restart Horizon again.

Now we can add the fulltext index as such:

ALTER TABLE `flare`.`error_occurrences`

ADD FULLTEXT INDEX `error_message_fulltext` (`exception_message`);

And restart horizon:

php artisan horizon:continue

Conclusion

A minor fix turned out to be more complicated than expected, but the result is what counts. And our page now got a lot faster. We'll start inviting beta testers soon. Interested? Contact us at support@flareapp.io.